AutoML was built to make machine learning easier and, in many ways, it has. By automating model selection, hyperparameter tuning, and elements of the training process, AutoML platforms have helped teams accelerate development and reduce the complexity of building models from scratch. For organizations getting started with AI, these tools can dramatically shorten the path from data to a working model.

But there’s a problem. The part of AI that AutoML optimizes is no longer the part that determines success. In production AI environments, the challenge isn’t building a model. It’s getting that model to run reliably, efficiently, and within the constraints of real-world systems. This is where the limitations of AutoML are visible.

What AutoML Actually Optimizes

Most AutoML platforms are designed to answer a specific question: “What is the most accurate model we can train on this dataset?”

That’s a useful question. Accuracy and training efficiency still matter, but neither of these factors alone determine whether a model will succeed once it leaves the development environment.

AutoML systems typically operate within controlled, cloud-based environments where compute is abundant, memory is flexible, and infrastructure is designed to support experimentation. Within these conditions, models can be trained, evaluated, and iterated quickly.

What’s often missing from this process is any meaningful connection to the environment in which the model will ultimately run. As a result, AutoML tools can produce models that perform well in training but have no inherent alignment with the constraints of the target inference environment. The model may be mathematically sound and highly accurate, but that doesn’t guarantee it will execute effectively—or at all—once deployed.

Where AutoML Breaks Down in Production

An AI model that performs well in a cloud environment can fail completely when moved into production. This happens more often than teams expect. It’s a common outcome of development workflows that prioritize training performance without accounting for deployment realities. When models are introduced into real systems, a different set of constraints begins to shape how they behave.

Memory availability may be significantly lower than in the development environment, and latency requirements may be strict enough that even small inefficiencies become problematic. Power consumption must also be tightly controlled—especially in edge and embedded environments—and differences in hardware architecture can directly impact how models execute at runtime.

These constraints are fundamental to any working AI powered device. When they’re introduced late in the process, teams often discover that a “finished” model is not actually ready for production. Instead, it becomes the starting point for another phase of engineering work.

The Hidden Cost of AutoML: Deployment Becomes the Problem

AutoML doesn’t eliminate complexity—it shifts it downstream. By optimizing for training outcomes, AutoML can accelerate the early stages of development by deferring the hardest problems until later. Once an AI model is handed off for deployment, engineering teams must adapt it to the constraints of the target system.

This can involve multiple layers of additional work:

● Modifying model architectures to fit within memory limits

● Optimizing inference performance to meet latency targets

● Adjusting compute behavior for specific hardware platforms

● Iterating through deployment pipelines to resolve runtime issues

These steps are rarely straightforward. Small changes in hardware, software environments, or system requirements can trigger new rounds of optimization and testing. What initially appeared to be a streamlined development process becomes a fragmented workflow with repeated handoffs and feedback loops.

Even when a model successfully runs on target hardware, ensuring consistent and correct predictions introduces additional challenges. Small differences in execution environments or low-level implementations can impact output.

In practice, this leads to longer timelines, increased engineering overhead, and greater uncertainty around whether a model will ultimately succeed in production. As teams attempt to make models viable across different deployment environments, the cycle repeats.

Production AI Is a Systems Problem, Not a Modeling Problem

The core issue is not that AutoML is ineffective, but that it’s solving a different problem than the one production AI requires. Building a model is only one part of the system. In production, that model must operate within a broader environment that includes hardware constraints, system architecture, and real-world operating conditions. These factors define the feasible solution space for the model itself.

This is why production AI increasingly requires a systems-first approach. Instead of asking, “What is the best model we can train?” teams need to ask: “What is the best model that will actually run in this environment?”

That shift changes how AI systems are designed. Constraints are no longer treated as downstream considerations. They become inputs into the development process from the beginning.

How ModelCat Takes a Different Approach

ModelCat approaches AI production from the perspective of deployment success rather than training performance. Instead of optimizing models in isolation, ModelCat builds models in the context of the environment in which they will run.

Instead of relying on a single training pass, ModelCat runs thousands of experiments to explore the entire solution space. The system uses AI to generate candidate models, tests them on real silicon and SDKs in the target production context, and iterates continuously until it identifies models that meet the user’s requirements.

Over time, ModelCat builds an accumulated understanding of what works best —and worst— across different problems, datasets, and hardware environments. This “known successes” baseline allows the system to start from informed starting points—rather than from scratch—significantly reducing time to success while producing higher-quality, deployment-ready models.

This approach incorporates an understanding of the:

● Characteristics of the target hardware, including memory, compute capabilities, and hardware acceleration paths

● Structure and behavior of the user’s dataset, including how it compares to previously learned datasets

● Requirements of the specific use case, including performance, latency, and reliability expectations

● Knowledge derived from thousands of model iterations across different problems and environments

● New architectures and training techniques through continually evaluating the well-spring of open source research

By combining these inputs, ModelCat can explore the solution space more effectively and identify architectures that are not only accurate, but also executable within the constraints of the target environment. The system also develops an understanding of how individual model fragments perform, allowing it to construct novel architectures by combining the most effective elements to solve a given problem.

This means teams can benefit from the latest advances in machine learning without needing to manually evaluate new research, integrate new frameworks, or redesign models for each deployment scenario. The system applies those advancements automatically within the context of real-world requirements.

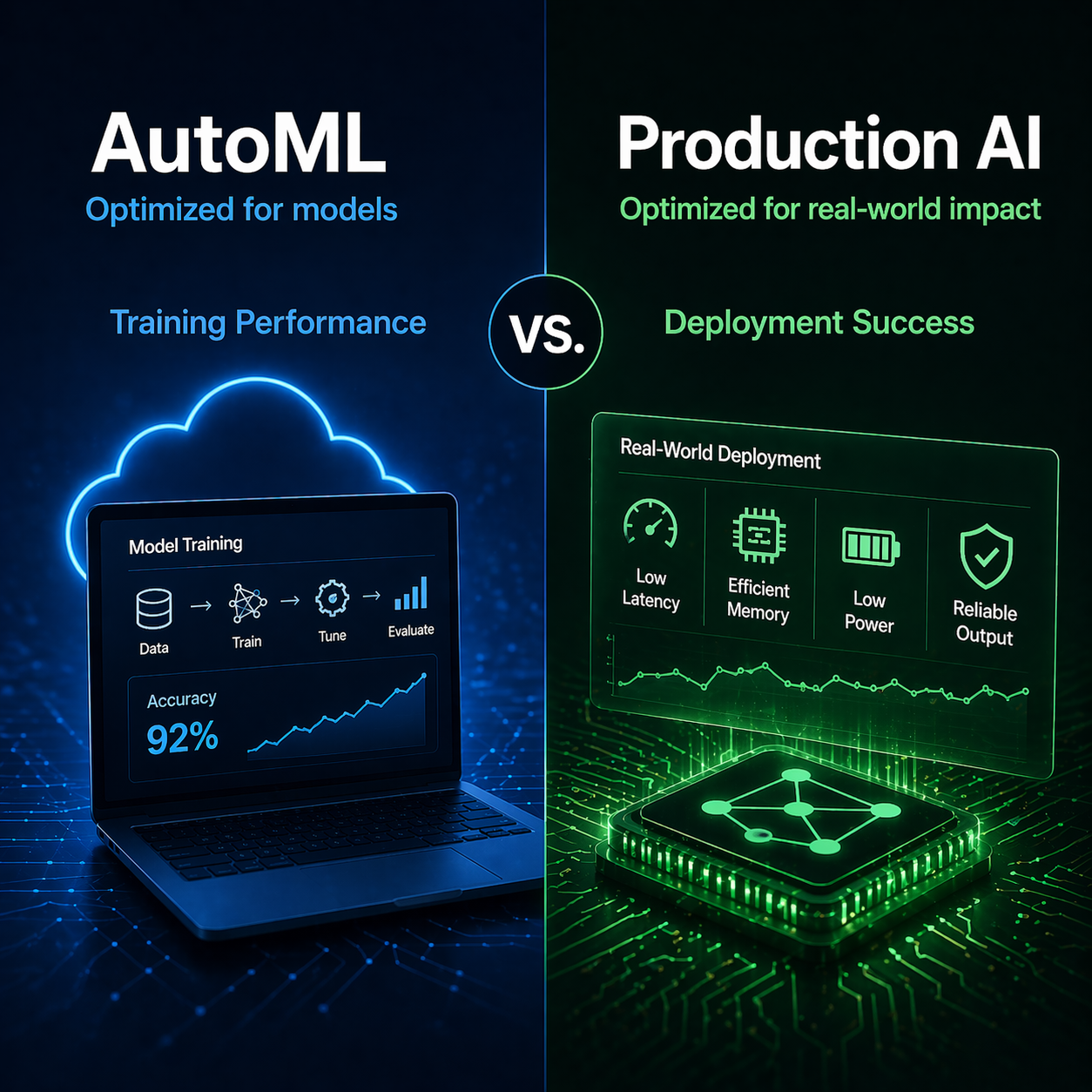

The Key Difference: Training Performance vs. Deployment Success

The distinction between AutoML and ModelCat can be summarized simply: AutoML optimizes for training performance, while ModelCat uses AI to optimize for deployment success.

Most AutoML approaches are fundamentally human-in-the-loop systems. They automate parts of model training, but still rely on predefined workflows and manual intervention to adapt models for deployment. In that sense, they assist in building AI, but are not themselves AI-powered systems.

ModelCat takes a different approach. It uses AI to generate, evaluate, and refine models autonomously—running large numbers of experiments, learning from outcomes, and continuously improving its ability to solve new problems. This allows ModelCat to go beyond assisting development and instead function as an intelligent system for building production-ready AI.

Why This Shift Matters Now

As AI moves beyond centralized infrastructure and into edge devices, embedded systems, and diverse hardware environments, the gap between development and deployment is becoming more pronounced.

Organizations are no longer building AI systems for a single, stable environment. They’re building systems that must operate across multiple devices, processor architectures, and performance tiers. In these contexts, the ability to train a model is no longer the limiting factor.

Instead, the limiting factor is whether that model can run. This is why approaches that focus exclusively on model training are becoming insufficient. The complexity of modern deployment environments requires an AI-driven approach that can account for the full system, not just the model. At this scale, the number of variables, constraints, and interactions quickly exceeds what manual workflows can realistically manage.

Building AI That Actually Runs

AutoML has played an important role in making machine learning more accessible, but as AI systems move into production environments, accessibility alone is not enough. The models that succeed in production are not simply the ones that train well. They’re the ones that can operate reliably within the constraints of real systems.

That requires a shift in perspective—from optimizing models in isolation to designing systems that work in practice. ModelCat is built around that shift, using AI to generate, evaluate, and refine models against real-world constraints.

That matters because in production AI, building the model isn’t the hardest part. Getting it to run is.

If you’re evaluating AutoML approaches and looking for a path to production-ready AI, ModelCat can show you how it works in practice. Contact our team to discuss your project or schedule a test drive to see how ModelCat uses AI to generate, optimize, and validate models against real-world constraints from the start.